Cooperative Multi-Agent Reinforcement Learning in Sparse-Reward, Partially Observable 3D Environments

- This blog explores how cooperative multi-agent reinforcement learning (MARL) can be improved in complex 3D environments where agents receive limited information and sparse rewards. The research adapts a single-agent algorithm (QMIX) for teamwork scenarios using a Portal 2-inspired simulation, where agents must coordinate actions to solve puzzles.

- To improve performance, several enhancements were introduced, including parallel experience collection, prioritised learning from important events, and memory-based learning using LSTM. A curriculum learning approach—starting with simple tasks and gradually increasing complexity—proved essential for success.

- The results show that agents can learn effective cooperation, even in challenging environments, and can transfer knowledge to new tasks.

- Overall, the study demonstrates that with the right training strategies, MARL systems can enable better coordination in real-world applications like robotics, autonomous vehicles, and simulation environments.

Reinforcement learning (RL) already outperforms elite players in board games and classic video games. Turning those solo successes into genuine team play is the next big step. Yet, many algorithms built for single-agent tasks break down when several agents must work together, especially in 3-D worlds where each player sees only a slice of the action.

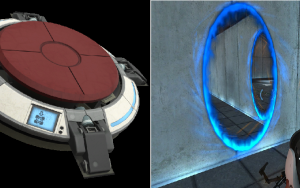

De Wet Denkema’s master’s research under supervision of Professor Herman Engelbrecht closes that gap. The research adapts recent single-agent advances for multi-task, cooperative multi-agent reinforcement learning (MARL) inside a Portal 2-inspired simulation. Two virtual robots must press buttons, create portals, and walk through them in the right sequence before time runs out. Sparse rewards, partial views, and strict ordering of subtasks make this a formidable test bench for teamwork.

Building the Testbed

The research evaluated available game engines, settled on a Portal 2 environment, and built a suite of puzzles that grow in complexity. Each scenario keeps the action space small—move, jump, place portal—yet demands long-range memory and joint planning. The setup supplies a challenging benchmark that mirrors real-world problems, such as warehouse robots sharing narrow aisles or drone fleets coordinating deliveries.

The core learner is QMIX, a value-based MARL algorithm. To raise its ceiling, the study added:

- Decoupled actor processes that gather experience in parallel, multiplying learning samples.

- Prioritised experience replay, so the network trains more often on rare but important memories.

- Recurrent burn-in to give the long short-term memory (LSTM) layer enough context before gradients flow.

- Reward standardisation and n-step returns to keep updates stable and informative.

Noisy networks for exploration and value-function rescaling were tested too, yet both options cut performance in every trial and were dropped. An exhaustive ablation confirmed that the remaining mix is required for success in this setting.