Evaluation Process

The Evaluation Cycle at Stellenbosch University

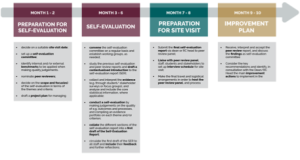

The quality assurance and enhancement cycle at Stellenbosch University is a structured, developmental process intended to strengthen organisational effectiveness, academic quality, and the student and staff experience. Each evaluation follows a ten-month timeline and includes preparation, evidence-based self-reflection, an external peer review, and the development of an improvement plan. Together, these stages support continuous enhancement across academic and professional support environments and ensure alignment with the University’s strategic priorities.

Purpose and Principles

The cycle is grounded in SU's commitment to responsible governance, institutional learning, and transformation. Evaluations encourage environments to reflect critically on their structures, culture, practices, programmes, and outcomes; to identify areas of strength and challenge; and to use evidence to make well-motivated quality judgements. The process is developmental rather than punitive, promoting honest engagement and sustainable improvement.

How the Cycle Works

The evaluation timeline guides environments through four key stages, followed by a follow-up report:

Environments plan the evaluation, establish self-evaluation structures, define the scope of reflection, and identify benchmarks and evidence sources. This stage lays the foundation for a coherent and well-coordinated process.

A comprehensive self-evaluation is conducted using evidence, institutional data, stakeholder feedback, and the Themes and Guiding Questions. A Self-Evaluation Report (SER) is drafted, refined, and approved for submission to the peer review panel.

Environments finalise the SER, liaise with peer reviewers, and make arrangements to host the site visit. The visit enables an external panel to validate the SER and engage with staff, students, and stakeholders.

Once the peer review report is received, environments identify improvement actions in consultation with the Dean or Responsibility Centre (RC) Head. These actions form the improvement plan submitted to the Quality Committee.

Two years after the evaluation, environments submit a follow-up report demonstrating progress on the agreed improvement actions.

Supporting Resources

All required tools, guidelines, templates, policies, and frameworks for each stage of the evaluation cycle are available on the Policy and Resources page. These resources are organised according to the four phases of the evaluation process to support environments at the appropriate stage of their evaluation timeline.

Evidence and Institutional Learning

The evaluation process is evidence-driven. Environments draw on multiple sources, including survey data, institutional core statistics, programme information, and stakeholder insights, to support reflection and decision-making. This evidence base enables environments to identify trends, analyse performance, and implement targeted improvements that support academic renewal and student success.

Governance and Quality Committee Reporting

The evaluation cycle feeds directly into the University’s governance schedule. A full set of reports is tabled at Quality Committee meetings to ensure institutional oversight, coherence, and alignment across faculties, responsibility centres, and support divisions.

Core Statistics

Dean's or Responsibility Centre Head's Responses

Department/Division Response Reports

Peer Review Reports

Self-Evaluation Reports

Two-year Follow-up Reports

Quality Committee Dates

Because the evaluation cycle links directly to the governance year, environments must plan their submissions around the Quality Committee's agenda close dates and meeting dates for the year.

These dates are also published in the University's Almanac.

2026 Quality Committee Dates

| Agenda Close Date | Quality Committee Meeting Date |
| --- | --- |
| 31 March 2026 | 24 April 2026, Friday 09H00-13H00 |
| 28 April 2026 | 22 May 2026, Friday 09H00-13H00 |
| 14 August 2026 | 4 September 2026, Friday 09H00-13H00 |
| 17 September 2026 | 13 October 2026, Tuesday 09H00-13H00 |

ATTRIBUTION: Image courtesy of Stellenbosch University

ATTRIBUTION: Graphic courtesy of Centre for Academic Planning and Quality Assurance, Stellenbosch University