Smarter Cooling for Mobile Network Sites: Reinforcement Learning for Energy-Efficient Thermal Management

This project examined whether reinforcement learning could reduce the energy used to cool telecom containers without allowing internal temperatures to drift into unsafe ranges. The result was a simulation-based control system that combined a fitted thermal model with a Deep Q-Network agent and delivered lower energy use than conventional automatic control strategies in both humid and arid conditions.

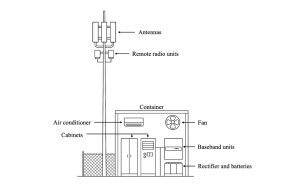

What looks like a simple telecom container is actually a dense, heat-generating technical space. Mobile network operator (MNO) sites house equipment such as baseband units, rectifiers, batteries, and transmission hardware, all of which need stable operating conditions. If temperatures climb too high, equipment performance can drop, batteries can degrade faster, and the risk of failure increases.

In this project, Rynhardt du Plessis, under the supervision of Professor Riaan Wolhuter and Dr Jaco du Toit, explored how reinforcement learning could be used to manage that heat more efficiently.

The starting point was a practical energy problem. Measurements from a Vodacom distributed antenna system site in Somerset West showed that cooling made up about 41% of total site energy use when one air conditioner was running. A single air conditioner drew about 1.6 kW, while the site’s total measured power use was about 3.9 kW. That made cooling a sensible place to look for savings.

Building a Thermal Model Before Training the Agent

Before any machine learning could begin, the project needed a reliable way to simulate how a container heats up and cools down. Du Plessis developed a simplified thermal model that captured the main heat-transfer processes involved: conduction, heat moving through solid materials; convection, heat transfer by moving air; and radiation, heat transfer via electromagnetic waves. The model also accounted for solar loading by using a solar proxy, which is a simplified stand-in for the heating effect of sunlight across the day.

This model treated the container interior as a lumped thermal mass, meaning the inside air was approximated as having one average temperature rather than many different hot and cold zones. That simplification made the simulation faster and easier to use for control testing. It also proved accurate enough for the job. After parameter fitting and validation against measured temperature data, the model reached a mean absolute error of about 0.50°C on the test set. In other words, its predicted internal temperature tracked the measured temperature closely enough to serve as a practical basis for control design.
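
To make the lumped-mass idea concrete, here is a minimal sketch of a single-node thermal model and the mean-absolute-error check used to judge it. It is not the project's fitted model: every parameter value and name below is a hypothetical placeholder, conduction and convection are folded into one assumed wall conductance, and radiation is not modeled separately.

```python
from dataclasses import dataclass


@dataclass
class LumpedContainer:
    """Single-node model: the whole interior shares one average temperature."""
    heat_capacity_j_per_k: float = 5.0e5   # hypothetical thermal mass of air and equipment
    ua_w_per_k: float = 40.0               # hypothetical wall conductance (conduction + convection)
    solar_gain_w: float = 600.0            # hypothetical heat gain when the solar proxy equals 1
    equipment_heat_w: float = 2300.0       # hypothetical internal heat load

    def step(self, t_in_c, t_out_c, solar_proxy, cooling_w, dt_s=60.0):
        """Advance the interior temperature by one explicit Euler step."""
        q_walls = self.ua_w_per_k * (t_out_c - t_in_c)   # heat flowing in through the walls
        q_solar = self.solar_gain_w * solar_proxy        # solar proxy assumed to lie in [0, 1]
        q_net = q_walls + q_solar + self.equipment_heat_w - cooling_w
        return t_in_c + q_net * dt_s / self.heat_capacity_j_per_k


def mean_absolute_error(predicted, measured):
    """Average absolute gap between simulated and measured temperatures."""
    return sum(abs(p - m) for p, m in zip(predicted, measured)) / len(measured)


if __name__ == "__main__":
    model = LumpedContainer()
    temperature = 25.0
    for _ in range(60):  # one simulated hour with no cooling
        temperature = model.step(temperature, 30.0, 0.5, cooling_w=0.0)
    print(f"Interior after one hour without cooling: {temperature:.2f} °C")
```

In a fitting workflow, the placeholder parameters would be tuned until `mean_absolute_error` on held-out measurements is acceptably small.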

Fig 1: System-level interaction diagram for a typical mobile network site.

Teaching the System When to Use the Fan or Air Conditioner

The control problem was framed as a reinforcement learning task. Reinforcement learning is a machine learning method in which an agent learns through repeated trial and error, improving its decisions based on rewards and penalties. In this case, the agent observed the internal and outside temperatures, the temperature error, and the previous action, then chose among four actions: off, fan, air conditioner, or air conditioner plus fan. A minimum switching delay of 30 minutes was built in to keep the behavior closer to real hardware operation.
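
The observation, the four actions, and the switching delay can be sketched as follows. The function names, the setpoint argument, and the sign convention for the temperature error are assumptions made for illustration; only the four actions and the 30-minute delay come from the project description.

```python
from enum import IntEnum


class Action(IntEnum):
    OFF = 0
    FAN = 1
    AIRCON = 2
    AIRCON_AND_FAN = 3


MIN_SWITCH_DELAY_MIN = 30  # minimum time between action changes


def build_observation(t_in_c, t_out_c, setpoint_c, previous_action):
    """Pack the observed quantities into one state tuple.

    The temperature error is assumed to be inside temperature minus setpoint.
    """
    return (t_in_c, t_out_c, t_in_c - setpoint_c, int(previous_action))


def apply_switch_delay(requested, current, minutes_since_switch):
    """Hold the current action until the minimum delay has elapsed."""
    if requested != current and minutes_since_switch < MIN_SWITCH_DELAY_MIN:
        return current
    return requested


if __name__ == "__main__":
    # The agent asks for the air conditioner 10 minutes after the last change,
    # so the request is held back and the system stays off.
    print(apply_switch_delay(Action.AIRCON, Action.OFF, 10).name)
```

Enforcing the delay outside the agent, as here, is one possible design; it keeps the learned policy from cycling the compressor faster than real hardware should.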

The learning system used a Deep Q-Network, or DQN, which is a neural-network-based version of Q-learning. Its job was to reduce electricity use while keeping the internal temperature below the allowable threshold. The reward function penalized both energy use and temperature violations. That matters, since cheap cooling is only useful if the equipment remains protected. Training was done in simulation, and the results showed stable convergence after roughly 400 to 600 episodes in both climate settings used in the study.
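
A reward of this kind might look like the sketch below. The weights, the linear form of the violation penalty, and the function names are assumptions, not the project's actual reward. The second function is the standard Q-learning target that a DQN is trained to match.

```python
def reward(energy_kwh, t_in_c, t_max_c, energy_weight=1.0, violation_weight=10.0):
    """Negative cost: energy used plus any excess above the temperature limit.

    Both weights are hypothetical; the violation weight is set higher so that
    saving energy never looks better than protecting the equipment.
    """
    excess_c = max(0.0, t_in_c - t_max_c)
    return -(energy_weight * energy_kwh + violation_weight * excess_c)


def td_target(reward_value, next_q_values, gamma=0.99, done=False):
    """Standard Q-learning target: reward plus discounted best next-state value."""
    if done:
        return reward_value
    return reward_value + gamma * max(next_q_values)


if __name__ == "__main__":
    # Cooling that costs 1 kWh but stays in range scores better than
    # using no energy and overshooting the limit by 2 °C.
    print(reward(1.0, 24.0, 30.0), reward(0.0, 32.0, 30.0))
```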

What the Results Showed in Two Climates

The learned controller was tested in two environments: Landroskop, representing a more humid climate, and Tankwa, representing a hotter, drier, more extreme climate. In Landroskop, the agent used 862.51 kWh and triggered only 4 alarms, compared with 2,360.29 kWh and 863 alarms for the current automatic air conditioner and fan strategy. In Tankwa, the agent used 4,110.09 kWh and triggered 114 alarms, compared with 5,663.63 kWh and 2,137 alarms for that same automatic strategy.

Those numbers are useful because they show the trade-off directly. The agent did not simply shut the equipment off and accept overheating. It kept temperatures near the target range while using about 63% less energy than the conventional automatic strategy in Landroskop and about 27% less in Tankwa.
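
The size of the gap is easy to check from the reported figures. The short script below computes the relative reduction in energy use and alarm count for each site; the data structure and function name are illustrative only.

```python
# (energy in kWh, alarm count) as reported for each controller
results = {
    "Landroskop": {"agent": (862.51, 4), "automatic": (2360.29, 863)},
    "Tankwa": {"agent": (4110.09, 114), "automatic": (5663.63, 2137)},
}


def percent_reduction(baseline, value):
    """Relative drop from baseline to value, in percent."""
    return 100.0 * (baseline - value) / baseline


if __name__ == "__main__":
    for site, r in results.items():
        energy_cut = percent_reduction(r["automatic"][0], r["agent"][0])
        alarm_cut = percent_reduction(r["automatic"][1], r["agent"][1])
        print(f"{site}: energy down {energy_cut:.1f}%, alarms down {alarm_cut:.1f}%")
```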

Across both environments, its performance came close to the report’s Greedy controller, which served as a near-best benchmark. Just as important, the agent generalized from Landroskop to Tankwa without retraining, suggesting that the learned policy was not tied to a single narrow weather pattern.

From Simulation to Future Deployment

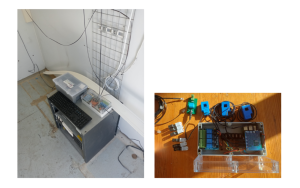

The project also included a hardware platform for measurement and future implementation. A Raspberry Pi 5 served as the main controller, reading sensors, logging data, and managing communication among components. DHT22 sensors were used to record temperature and humidity inside and outside the container, while current transformers were added to measure electrical draw from the cooling equipment. The hardware was installed and tested for measurement, although the cooling elements themselves could not be fully controlled on-site before the end of the project due to delays in installation.

That detail matters. This wasn’t presented as a fully deployed field control system. It was a measured, simulation-first study with a hardware foundation in place for future implementation. That makes the work more credible, not less. It shows a clear path from physical measurement to thermal modeling, to control design, to later real-world testing.

Fig 2: Hardware setup used for temperature and power measurements.

Final Thoughts

What this project shows is that a relatively compact control system can make better cooling decisions than a fixed rule-based strategy when conditions change across the day. By combining heat-transfer modeling with reinforcement learning, the work offers a practical route toward lower-energy thermal management for telecom infrastructure. It also leaves room for the next round of refinement, including richer thermodynamic modeling, direct wind and solar measurements, and on-site control testing. For a final-year engineering project, that’s a solid piece of work with a clear technical purpose and a direct industry link.